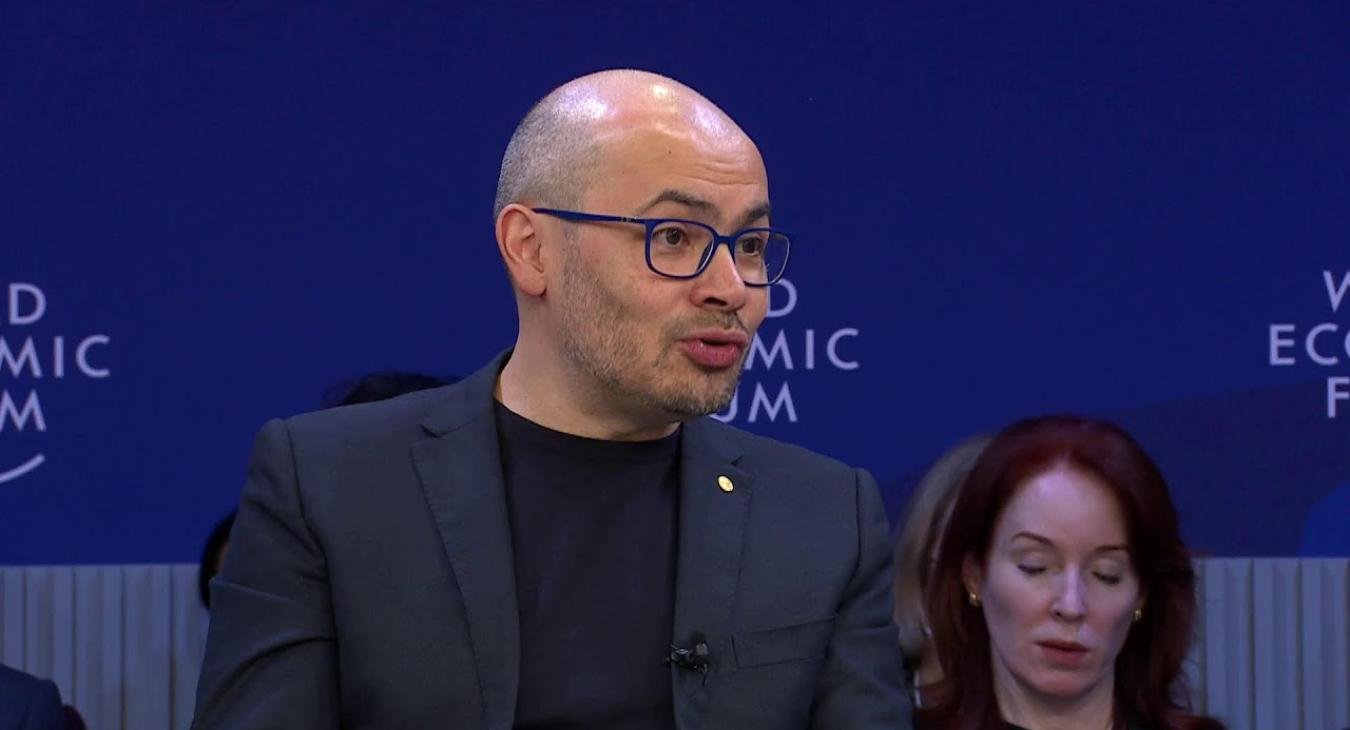

This video features a discussion between Demis Hassabis (CEO of Google DeepMind) and Dario Amodei (CEO of Anthropic) at the World Economic Forum, exploring the rapid progression toward Artificial General Intelligence (AGI).

Background/Context

The video captures a high-level dialogue between the leaders of two of the world’s most prominent AI labs, placing the current AI revolution within the context of a "technological adolescence" for humanity. It serves as a sequel to their previous discussions, evaluating how much closer the world has moved to AGI in the past year.

Problem/Purpose

The central purpose is to define the timeline for AGI and address the critical gap between rapid technological advancement and society's ability to manage the resulting risks. The conversation focuses on whether the "loop" of AI development can be closed, meaning that AI begins to build and improve itself, and on how to navigate the economic and geopolitical instability this might cause.

Results/Key Findings

- Accelerated Timelines: Amodei suggests that models performing at a "Nobel laureate level" across many fields could arrive as early as 2026–2027, while Hassabis maintains a roughly 50% chance of AGI by the end of the decade.

- The Self-Improvement Loop: A critical driver of speed is AI’s growing ability to write code and conduct AI research, which could create an exponential feedback loop in model development.

- Labor Displacement: There is a consensus that entry-level white-collar jobs are at immediate risk, with significant disruption expected within the next one to five years.

- Geopolitical Friction: The speakers discuss how competition with "geopolitical adversaries" like China makes it difficult for Western companies to slow development for safety reasons; they nonetheless advocate for measures such as chip export controls.

Conclusion/Implications

The speakers conclude that while the upsides of AGI for science and medicine are immense, the risks—including bioterrorism, autonomous system misalignment, and loss of human meaning—require an urgent "battle plan." They emphasize that the next few years are a critical window where international cooperation and minimum safety standards must be established to ensure humanity survives its transition into an AGI-driven era.
